RedPajama replicates LLaMA dataset to build open source, state-of

RedPajama, a project to create fully open-source large language models, has released a 1.2-trillion-token dataset that follows the LLaMA recipe.

From ChatGPT to LLaMA to RedPajama: I'm Switching My Interest to

Timeline of computing 2020–present - Wikipedia

Introducing MPT-7B: A New Standard for Open-Source, Commercially

2023 GPT4All Technical Report, PDF, Data

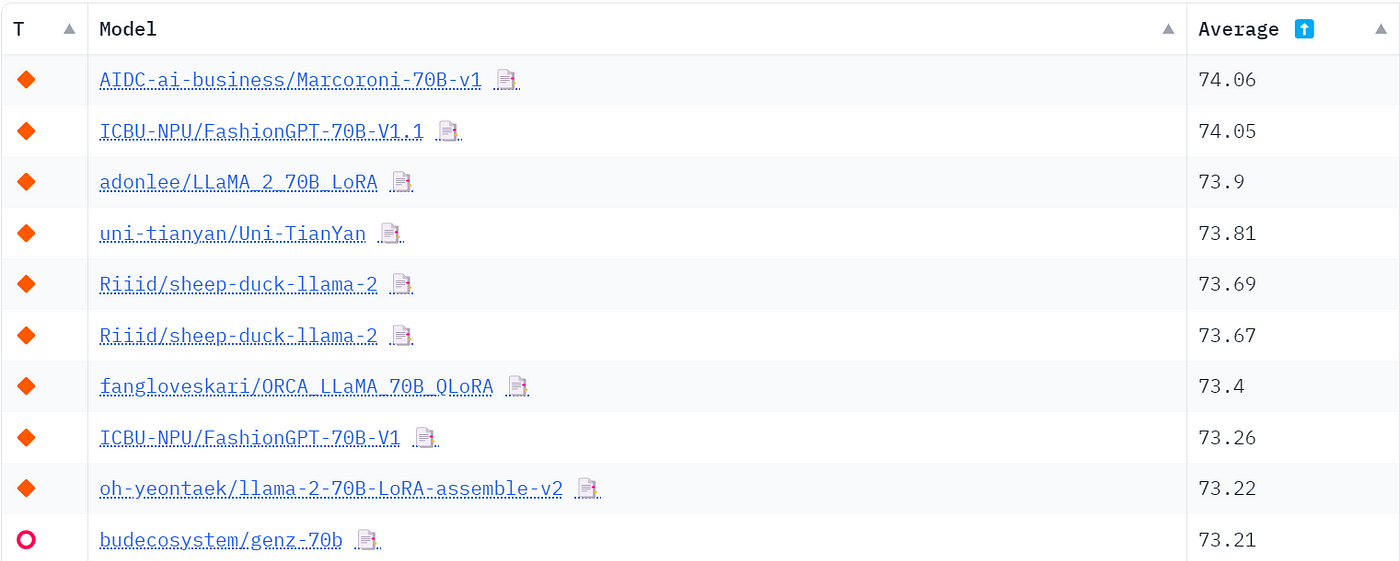

List of Open Sourced Fine-Tuned Large Language Models (LLM)

Open-Sourced Training Datasets for Large Language Models (LLMs)

S_04. Challenges and Applications of LLMs - Deep Learning Bible
