DeepSpeed Compression: A composable library for extreme

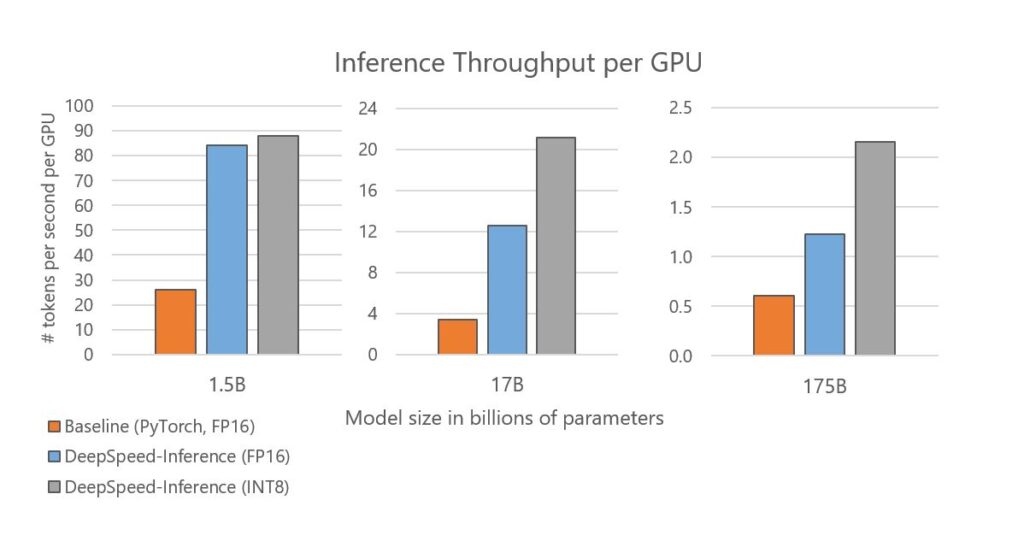

Large-scale models are revolutionizing deep learning and AI research, driving major improvements in language understanding, creative text generation, multilingual translation, and more. But despite their remarkable capabilities, the models’ large size creates latency and cost constraints that hinder the deployment of applications built on top of them. In particular, increased inference time and memory consumption […]

DeepSpeed - Microsoft Research: Timeline

GitHub - microsoft/DeepSpeed: DeepSpeed is a deep learning optimization library that makes distributed training and inference easy, efficient, and effective.

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

[PDF] DeepSpeed Inference: Enabling Efficient Inference of Transformer Models at Unprecedented Scale

This AI newsletter is all you need #6

AI at Scale: News & features - Microsoft Research

Xiaoxia(Shirley) Wu (@XiaoxiaWShirley) / X

Gioele Crispo on LinkedIn: What an incredible achievement! It's fascinating to see how a simple…

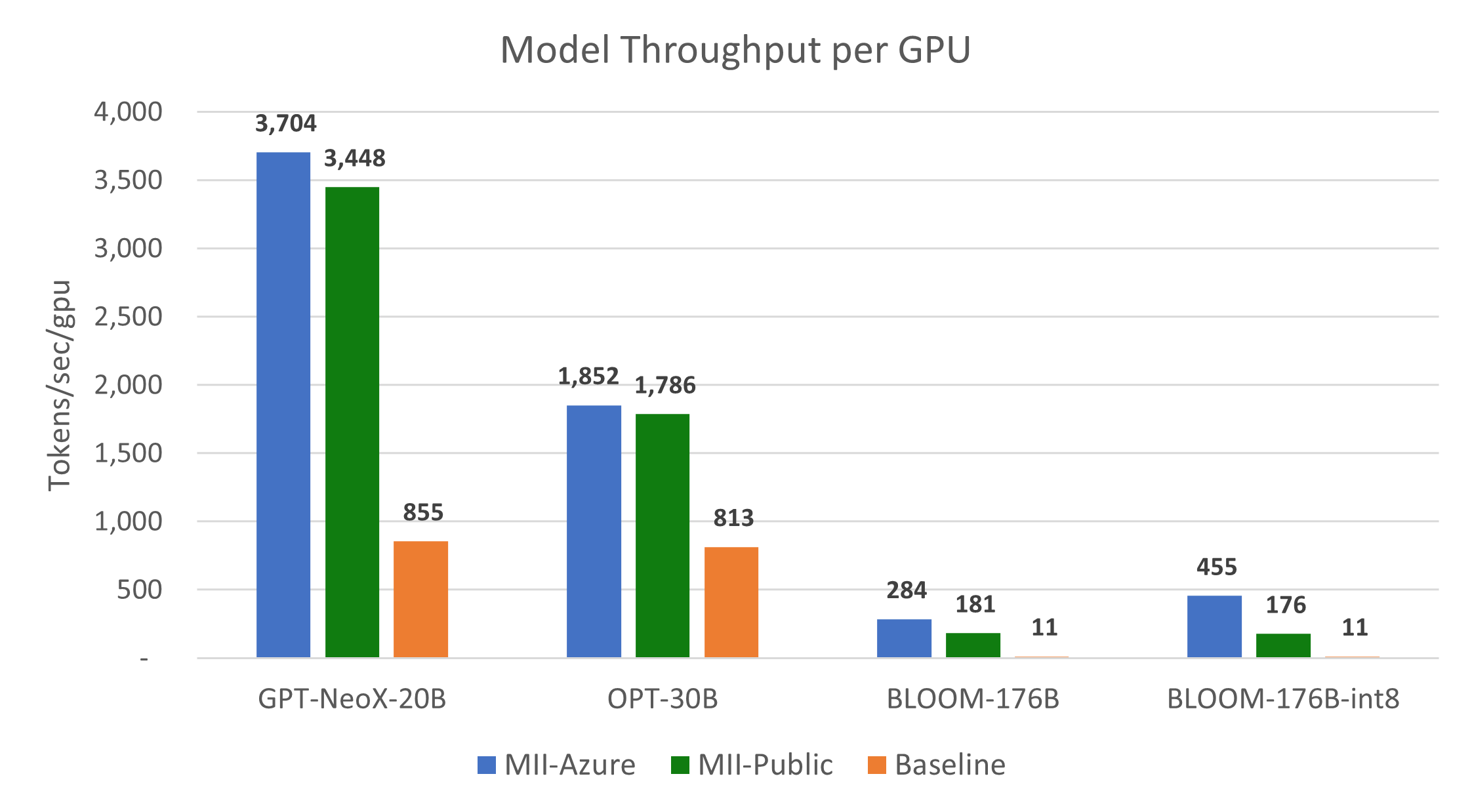

DeepSpeed-MII: instant speedup on 24,000+ open-source DL models with up to 40x cheaper inference - DeepSpeed
